Entity Linking

In natural language processing, entity linking, also referred to as named-entity linking (NEL), named-entity disambiguation (NED), named-entity recognition and disambiguation (NERD), or named-entity normalization (NEN), is the task of assigning a unique identity to entities (such as famous individuals, locations, or companies) mentioned in text.

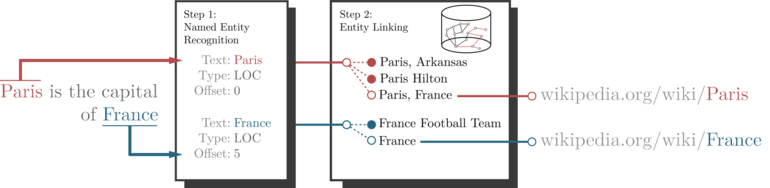

For example, given the sentence "Paris is the capital of France", the goal is to determine that "Paris" refers to the city of Paris and not to Paris Hilton or any other entity that could be referred to as "Paris". Entity linking differs from named-entity recognition (NER) in that NER identifies the occurrence of a named entity in text but does not identify which specific entity it is (see Differences from other techniques).

Introduction

In entity linking, words of interest (names of persons, locations, and companies) in an input text are mapped to corresponding unique entities in a target knowledge base. Words of interest are called named entities (NEs), mentions, or surface forms. The target knowledge base depends on the intended application, but for entity linking systems intended to work on open-domain text it is common to use knowledge bases derived from Wikipedia (such as Wikidata or DBpedia). In this case, each individual Wikipedia page is regarded as a separate entity. Entity linking techniques that map named entities to Wikipedia entities are also called wikification.

Considering again the example sentence "Paris is the capital of France", the expected output of an entity linking system will be the Wikipedia pages for Paris and France (https://en.wikipedia.org/wiki/Paris and https://en.wikipedia.org/wiki/France). These uniform resource locators (URLs) can be used as unique uniform resource identifiers (URIs) for the entities in the knowledge base. Using a different knowledge base will return different URIs, but for knowledge bases built starting from Wikipedia there exist one-to-one URI mappings.
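As a rough illustration of such a one-to-one mapping, the sketch below derives an English Wikipedia URL and the corresponding DBpedia resource URI from the same page title. It assumes the common convention that spaces in titles become underscores and that both schemes share the resulting path segment; the percent-encoding rule (via Python's `urllib.parse.quote`) is a simplification, and the helper names `title_to_key` and `entity_uris` are hypothetical.

```python
from urllib.parse import quote

# Base URIs assumed for this illustration (English Wikipedia and DBpedia).
WIKIPEDIA_BASE = "https://en.wikipedia.org/wiki/"
DBPEDIA_BASE = "http://dbpedia.org/resource/"


def title_to_key(title: str) -> str:
    """Turn a page title into the path segment assumed to be shared by both URI schemes."""
    # Spaces become underscores; characters outside the safe set are percent-encoded.
    return quote(title.strip().replace(" ", "_"), safe="/(),'")


def entity_uris(title: str) -> dict:
    """Return the Wikipedia URL and the corresponding DBpedia URI for one entity."""
    key = title_to_key(title)
    return {"wikipedia": WIKIPEDIA_BASE + key, "dbpedia": DBPEDIA_BASE + key}


print(entity_uris("Paris"))
print(entity_uris("Paris Hilton"))
```

Because both URIs are built from the same key, converting between them is a simple prefix swap, which is what makes the mapping one-to-one in this simplified setting.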

In most cases, knowledge bases are manually built, but in applications where large text corpora are available, the knowledge base can be inferred automatically from the available text.

Entity linking is a critical step in bridging web data with knowledge bases, which is beneficial for annotating the huge amount of raw and often noisy data on the Web and contributes to the vision of the Semantic Web. Besides entity linking, other critical steps include event extraction and event linking.

Applications

Entity linking is beneficial in fields that need to extract abstract representations from text, such as text analysis, recommender systems, semantic search, and chatbots. In all these fields, concepts relevant to the application are separated from text and other non-meaningful data.

For example, a common task performed by search engines is to find documents that are similar to one given as input, or to find additional information about the persons mentioned in it. Consider a sentence that contains the expression "the capital of France": without entity linking, a search engine that looks only at the content of documents would not be able to directly retrieve documents containing the word "Paris", leading to so-called false negatives (FN). Even worse, the search engine might produce spurious matches (or false positives (FP)), such as retrieving documents referring to "France" as a country.

Many approaches orthogonal to entity linking exist for retrieving documents similar to an input document, for example latent semantic analysis (LSA) or comparing document embeddings obtained with doc2vec. However, these techniques do not allow the same fine-grained control offered by entity linking, as they return other documents instead of creating high-level representations of the original one. For example, obtaining schematic information about "Paris", as presented by Wikipedia infoboxes, would be much less straightforward, or sometimes even infeasible, depending on the query complexity.

Moreover, entity linking has been used to improve the performance of information retrieval systems and to improve search performance on digital libraries. Entity linking is also a key input for semantic search.

Challenges in entity linking

An entity linking system has to deal with a number of challenges before it can perform well in real-life applications. Some of these issues are intrinsic to the task of entity linking, such as text ambiguity, while others, such as scalability and execution time, become relevant when considering real-life usage of such systems.

- Name variations: the same entity might appear with different textual representations. Sources of these variations include abbreviations (New York, NY), aliases (New York, Big Apple), or spelling variations and errors (New yokr).

- Ambiguity: the same mention can often refer to many different entities, depending on the context, as many entity names tend to be polysemous (i.e. have multiple meanings). The word Paris, among other things, could refer to the French capital or to Paris Hilton. In some cases (as in the capital of France), there is no textual similarity between the mention text and the actual target entity (Paris).

- Absence: sometimes, some named entities might not have a correct entity link in the target knowledge base. This might happen when dealing with very specific or unusual entities, or when processing documents about recent events, in which there might be mentions of persons or events that do not yet have a corresponding entity in the knowledge base. Another common situation in which there are missing entities is when using domain-specific knowledge bases (for example, a biology knowledge base or a movie database). In all these cases, the entity linking system should return a NIL entity link. Understanding when to return a NIL prediction is not straightforward, and many different approaches have been proposed; for example, thresholding some kind of confidence score in the entity linking system, or adding an additional NIL entity to the knowledge base, which is treated in the same way as the other entities. Moreover, in some cases providing a wrong but related entity link might be better than no result at all from the perspective of an end user.

- Scalability and Speed: it is desirable for an industrial entity linking system to provide results in a reasonable time, and often in real time. This requirement is critical for search engines, chatbots, and entity linking systems offered by data-analytics platforms. Ensuring low execution time can be challenging when using large knowledge bases or when processing large documents. For example, Wikipedia contains nearly 9 million entities and more than 170 million relationships among them.

- Evolving Information: an entity linking system should also deal with evolving information, and easily integrate updates in the knowledge base. The problem of evolving information is sometimes connected to the problem of missing entities, for example when processing recent news articles in which there are mentions of events that do not have a corresponding entry in the knowledge base due to their novelty.

- Multiple Languages: an entity linking system might support queries performed in multiple languages. Ideally, the accuracy of the entity linking system should not be influenced by the input language, and entities in the knowledge base should be the same across different languages.
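Two of the challenges above, name variations and absence, can be sketched together: an alias table absorbs abbreviations and aliases, fuzzy string matching tolerates spelling errors, and a similarity cutoff acts as the confidence threshold below which the system returns NIL. This is a minimal sketch under stated assumptions: the alias table, the entity identifiers, and the 0.8 cutoff are hypothetical, and mapping "NY" to the city ignores that the abbreviation is itself ambiguous. It uses Python's standard `difflib.get_close_matches`.

```python
import difflib
from typing import Optional

# Hypothetical alias table: normalized surface forms -> entity identifiers.
ALIASES = {
    "new york": "New_York_City",
    "ny": "New_York_City",  # abbreviation (simplified: NY is ambiguous in reality)
    "big apple": "New_York_City",  # alias
    "paris": "Paris",
    "france": "France",
}

NIL = None  # returned when no entity in the knowledge base is a good enough match


def normalize(mention: str) -> str:
    """Lower-case and collapse whitespace so trivial variants compare equal."""
    return " ".join(mention.lower().split())


def link_surface_form(mention: str, cutoff: float = 0.8) -> Optional[str]:
    key = normalize(mention)
    if key in ALIASES:  # exact hit on a known name, alias, or abbreviation
        return ALIASES[key]
    # Tolerate spelling errors: take the closest alias above the similarity cutoff.
    close = difflib.get_close_matches(key, ALIASES.keys(), n=1, cutoff=cutoff)
    # Nothing similar enough: treat the entity as absent from the knowledge base.
    return ALIASES[close[0]] if close else NIL


print(link_surface_form("Big Apple"), link_surface_form("New yokr"), link_surface_form("Zorblax"))
```

Raising the cutoff makes the sketch return NIL more often; lowering it trades missing links for potentially wrong but related ones, mirroring the trade-off described above.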

Differences from other techniques

Entity linking is also known as named-entity disambiguation (NED), and is deeply connected to wikification and record linkage. Definitions are often blurry and vary slightly among authors: Alhelbawy et al. consider entity linking a broader version of NED, since NED assumes that the entity correctly matching a given textual named-entity mention is in the knowledge base, whereas entity linking systems might deal with cases in which no entry for the named entity is available in the reference knowledge base. Other authors do not make such a distinction and use the two names interchangeably.

- Wikification is the task of linking textual mentions to entities in Wikipedia (generally, limiting the scope to the English Wikipedia in case of cross-lingual wikification).

- Record linkage (RL) is considered a broader field than entity linking, and consists of finding records, across multiple and often heterogeneous datasets, that refer to the same entity. Record linkage is a key component for digitizing archives and for joining multiple knowledge bases.

- Named-entity recognition (NER) locates and classifies named entities in unstructured text into predefined categories such as person names, organizations, locations, and more. For example, the following sentence:

  Paris is the capital of France.

  would be processed by an NER system to obtain the following output:

  [Paris]City is the capital of [France]Country.

  Named-entity recognition is usually a preprocessing step of an entity linking system, as it can be useful to know in advance which words should be linked to entities of the knowledge base.

- Coreference resolution determines whether multiple words in a text refer to the same entity. It can be useful, for example, to understand which word a pronoun refers to. Consider the following example:

  Paris is the capital of France. It is also the largest city in France.

  In this example, a coreference resolution algorithm would identify that the pronoun It refers to Paris, and not to France or to another entity. A notable distinction compared to entity linking is that coreference resolution does not assign any unique identity to the words it matches; it simply says whether they refer to the same entity or not. In that sense, predictions from a coreference resolution system could be useful to a subsequent entity linking component.
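The division of labour among these techniques can be sketched on the example above: NER contributes typed mentions without identities, coreference contributes a pronoun-to-antecedent link without identities, and only the linker assigns knowledge-base identifiers, which can then be propagated along coreference chains. The NER output, coreference output, and linker decisions below are hypothetical hard-coded values, and mentions are keyed by surface form only for brevity (a real pipeline would key them by character offsets).

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical NER output: typed mentions, but no identities.
ner_mentions: List[Tuple[str, str]] = [("Paris", "City"), ("France", "Country")]

# Hypothetical coreference output: pronoun -> antecedent mention, again without identities.
coref: Dict[str, str] = {"It": "Paris"}

# Hypothetical linker decisions: mention text -> knowledge-base identifier.
kb_links: Dict[str, str] = {
    "Paris": "https://en.wikipedia.org/wiki/Paris",
    "France": "https://en.wikipedia.org/wiki/France",
}


def link_document(mentions, coref, kb_links) -> Dict[str, Optional[str]]:
    """Attach identifiers to NER mentions, then propagate them along coreference links."""
    links = {text: kb_links.get(text) for text, _type in mentions}  # None stands for NIL
    for pronoun, antecedent in coref.items():
        links[pronoun] = links.get(antecedent)
    return links


print(link_document(ner_mentions, coref, kb_links))
```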

Approaches to entity linking

Entity linking has been an active research topic in industry and academia for the last decade. However, most of its challenges remain unsolved, and many entity linking systems, with widely different strengths and weaknesses, have been proposed.

Broadly speaking, modern entity linking systems can be divided into two categories:

- Text-based approaches, which make use of textual features extracted from large text corpora (e.g. term frequency–inverse document frequency (tf–idf), word co-occurrence probabilities, etc.).

- Graph-based approaches, which exploit the structure of knowledge graphs to represent the context and the relation of entities.

Often, entity linking systems cannot be strictly assigned to either category; instead, they make use of knowledge graphs that have been enriched with additional textual features extracted, for example, from the text corpora that were used to build the knowledge graphs themselves.
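To make the text-based category concrete, the sketch below computes tf–idf vectors for a mention's context and for a short description of each candidate entity, then ranks candidates by cosine similarity. The one-line entity descriptions are hypothetical stand-ins for a real text corpus, and the plain `log(n / df)` idf is only one of several common tf–idf variants; this is an illustration of the feature, not any particular system's method.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Compute a sparse tf-idf vector (term -> weight) for each tokenized document."""
    n = len(docs)
    df = Counter(term for doc in docs for term in set(doc))
    vectors = []
    for doc in docs:
        tf = Counter(doc)
        vectors.append({t: (c / len(doc)) * math.log(n / df[t]) for t, c in tf.items()})
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse vectors stored as dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def rank_by_context(context, candidates):
    """Score each candidate's description against the mention context."""
    names = list(candidates)
    docs = [context.lower().split()] + [candidates[n].lower().split() for n in names]
    vectors = tfidf_vectors(docs)
    scores = {n: cosine(vectors[0], vec) for n, vec in zip(names, vectors[1:])}
    return max(scores, key=scores.get), scores


# Hypothetical entity descriptions standing in for a real corpus.
candidates = {
    "Paris": "capital city of France on the Seine",
    "Paris_Hilton": "American media personality and hotel heiress",
}
best, scores = rank_by_context("Paris is the capital of France", candidates)
print(best, scores)
```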

Text-based entity linking

The seminal work by Cucerzan in 2007 proposed one of the first entity linking systems to appear in the literature, and tackled the task of wikification, linking textual mentions to Wikipedia pages. This system classifies pages as entity, disambiguation, or list pages, which are used to assign categories to each entity. The set of entities present in each entity page is used to build the entity's context. The final entity linking step is a collective disambiguation performed by comparing binary vectors obtained from hand-crafted features and from each entity's context. Cucerzan's entity linking system is still used as a baseline for many recent works.

The work of Rao et al. is a well-known paper in the field of entity linking. The authors propose a two-step algorithm to link named entities to entities in a target knowledge base. First, a set of candidate entities is chosen using string matching, acronyms, and known aliases. Then the best link among the candidates is chosen with a ranking support vector machine (SVM) that uses linguistic features.
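The two-step structure described above can be sketched as follows: candidate generation by exact string matching, acronyms, and known aliases, followed by ranking with a linear scoring function. This is not Rao et al.'s implementation: the knowledge base, the three features (exact name match, a popularity prior, context keyword overlap), and the fixed `WEIGHTS` are all hypothetical; a linear ranking SVM would learn such weights from labeled data rather than have them set by hand.

```python
from typing import Dict, List, Optional, Set

# Hypothetical knowledge base: entity id -> names, aliases, popularity prior, context keywords.
KB: Dict[str, dict] = {
    "World_Health_Organization": {
        "names": ["World Health Organization"], "aliases": ["WHO"],
        "prior": 0.9, "keywords": {"health", "pandemic", "vaccine"},
    },
    "The_Who": {
        "names": ["The Who"], "aliases": ["Who"],
        "prior": 0.4, "keywords": {"band", "rock", "album"},
    },
}

WEIGHTS = (1.0, 0.5, 2.0)  # hypothetical; a ranking SVM would learn these


def acronym(name: str) -> str:
    """Initials of the capitalized words, e.g. 'World Health Organization' -> 'WHO'."""
    return "".join(word[0] for word in name.split() if word[0].isupper())


def generate_candidates(mention: str) -> List[str]:
    """Step 1: candidates from string matching, acronyms, and known aliases."""
    m = mention.lower()
    found = []
    for eid, entry in KB.items():
        names = [n.lower() for n in entry["names"]]
        aliases = [a.lower() for a in entry["aliases"]]
        acronyms = [acronym(n).lower() for n in entry["names"]]
        if m in names or m in aliases or m in acronyms:
            found.append(eid)
    return found


def features(mention: str, context_tokens: Set[str], eid: str):
    entry = KB[eid]
    exact = float(mention.lower() in (n.lower() for n in entry["names"]))
    overlap = float(len(context_tokens & entry["keywords"]))
    return (exact, entry["prior"], overlap)


def link(mention: str, context: str) -> Optional[str]:
    """Step 2: rank the candidates with a linear score; None means NIL."""
    tokens = set(context.lower().split())
    candidates = generate_candidates(mention)
    if not candidates:
        return None

    def score(eid: str) -> float:
        return sum(w * x for w, x in zip(WEIGHTS, features(mention, tokens, eid)))

    return max(candidates, key=score)


print(link("WHO", "the WHO warned about the pandemic"))
print(link("WHO", "the WHO released a new rock album"))
```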

Recent systems, such as the one proposed by Tsai et al., employ word embeddings obtained with a skip-gram model as language features, and can be applied to any language as long as a large corpus for building word embeddings is provided. As in most entity linking systems, linking is done in two steps: an initial selection of candidate entities, followed by a linear ranking SVM.
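A minimal sketch of how embeddings can serve as ranking features: average the word vectors of the mention context and compare the result with an entity vector by cosine similarity. The three-dimensional vectors below are invented for illustration; real ones would come from a skip-gram model trained on a large corpus, and Tsai et al. feed such similarities into a ranking SVM rather than ranking on a single cosine score as done here.

```python
import math
from typing import Dict, List, Optional

# Invented toy "embeddings"; real ones would come from a trained skip-gram model.
WORD_VECS: Dict[str, List[float]] = {
    "capital": [0.9, 0.1, 0.0],
    "france": [0.8, 0.2, 0.1],
    "hotel": [0.1, 0.9, 0.2],
    "celebrity": [0.0, 0.8, 0.3],
}
ENTITY_VECS: Dict[str, List[float]] = {
    "Paris": [0.85, 0.15, 0.05],
    "Paris_Hilton": [0.05, 0.85, 0.25],
}


def mean_vector(words: List[str]) -> Optional[List[float]]:
    """Average the vectors of the known words; None if no word has a vector."""
    vecs = [WORD_VECS[w] for w in words if w in WORD_VECS]
    if not vecs:
        return None
    return [sum(dim) / len(vecs) for dim in zip(*vecs)]


def cosine(u: List[float], v: List[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rank(context: str, candidates: List[str]) -> List[str]:
    """Order candidates by similarity between the context vector and each entity vector."""
    ctx = mean_vector(context.lower().split())
    if ctx is None:
        return []
    return sorted(candidates, key=lambda e: cosine(ctx, ENTITY_VECS[e]), reverse=True)


print(rank("Paris is the capital of France", ["Paris_Hilton", "Paris"]))
```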

Various approaches have been tried to tackle the problem of entity ambiguity. In the seminal approach of Milne and Witten, supervised learning is employed using the anchor texts of Wikipedia entities as training data. Other approaches also collected training data based on unambiguous synonyms.
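Wikipedia anchor texts can be turned into training data roughly as follows: every link gives a (mention, correct target) pair, and counting targets per anchor yields a prior probability of each entity given the mention, often called commonness. The link pairs below are hypothetical, and this is a sketch of the general idea rather than Milne and Witten's exact feature set: each anchor occurrence produces one positive example for its true target and negative examples for the other targets seen with the same anchor.

```python
from collections import Counter, defaultdict

# Hypothetical (anchor text, target page) pairs harvested from Wikipedia links.
anchor_links = [
    ("Paris", "Paris"), ("Paris", "Paris"), ("Paris", "Paris"),
    ("Paris", "Paris_Hilton"),
    ("City of Light", "Paris"),
]


def commonness_table(pairs):
    """P(entity | anchor text): how often each anchor links to each target."""
    counts = defaultdict(Counter)
    for anchor, target in pairs:
        counts[anchor.lower()][target] += 1
    return {
        anchor: {t: c / sum(targets.values()) for t, c in targets.items()}
        for anchor, targets in counts.items()
    }


def training_examples(pairs, table):
    """One labeled example per (occurrence, candidate): 1 for the true target, else 0."""
    examples = []
    for anchor, target in pairs:
        for candidate, prior in table[anchor.lower()].items():
            examples.append(((anchor, candidate, prior), int(candidate == target)))
    return examples


table = commonness_table(anchor_links)
print(table["paris"])
print(len(training_examples(anchor_links, table)))
```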

Graph-based entity linking

Modern entity linking systems do not limit their analysis to textual features generated from input documents or text corpora, but employ large knowledge graphs created from knowledge bases such as the English Wikipedia. These systems extract complex features that take advantage of the knowledge graph topology, or leverage multi-step connections between entities, which would be hidden by simple text analysis. Moreover, creating multilingual entity linking systems based on natural language processing (NLP) is inherently difficult, as it requires either large text corpora, often absent for many languages, or hand-crafted grammar rules, which differ widely among languages.

Han et al. propose the creation of a disambiguation graph (a subgraph of the knowledge base which contains candidate entities). This graph is employed for a purely collective ranking procedure that finds the best candidate link for each textual mention.
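The sketch below builds a small disambiguation graph: nodes are (mention, candidate entity) pairs, and edges connect candidates of different mentions, weighted by how related the two entities are in the knowledge graph. The candidates and page links are hypothetical, relatedness is approximated by the Jaccard overlap of link neighbourhoods, and the final step (choosing, for each mention, the candidate with the most edge weight from other mentions) is a deliberately simple stand-in for Han et al.'s collective ranking, which this sketch does not reproduce.

```python
from itertools import combinations

# Hypothetical candidate entities for each mention in a document.
CANDIDATES = {
    "Paris": ["Paris", "Paris_Hilton"],
    "France": ["France", "France_national_football_team"],
}
# Hypothetical outgoing links of each entity page in the knowledge graph.
LINKS = {
    "Paris": {"France", "Seine", "Eiffel_Tower"},
    "Paris_Hilton": {"Hilton_Hotels", "New_York_City"},
    "France": {"Paris", "Seine", "Europe"},
    "France_national_football_team": {"FIFA", "Europe"},
}


def relatedness(a, b):
    """Jaccard overlap of two entities' link neighbourhoods (each including itself)."""
    na, nb = LINKS[a] | {a}, LINKS[b] | {b}
    return len(na & nb) / len(na | nb)


def build_disambiguation_graph(candidates):
    """Nodes are (mention, candidate) pairs; edges join candidates of different mentions."""
    nodes = [(m, e) for m, es in candidates.items() for e in es]
    edges = {}
    for (m1, e1), (m2, e2) in combinations(nodes, 2):
        if m1 != m2:
            weight = relatedness(e1, e2)
            if weight > 0:
                edges[((m1, e1), (m2, e2))] = weight
    return nodes, edges


def collective_link(candidates):
    """Pick, for every mention, the candidate with the most support from other mentions."""
    nodes, edges = build_disambiguation_graph(candidates)
    support = {node: 0.0 for node in nodes}
    for (a, b), weight in edges.items():
        support[a] += weight
        support[b] += weight
    return {m: max(es, key=lambda e: support[(m, e)]) for m, es in candidates.items()}


print(collective_link(CANDIDATES))
```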

Another well-known entity linking approach is AIDA, which uses a series of complex graph algorithms and a greedy algorithm that identifies coherent mentions on a dense subgraph, also considering context similarities and vertex importance features, to perform collective disambiguation.
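A greedy dense-subgraph search of the kind described above can be sketched as follows: repeatedly remove the candidate entity with the smallest weighted degree, never removing the last remaining candidate of any mention, until each mention has a single candidate. This is a simplified sketch, not AIDA itself: AIDA also tracks the best intermediate subgraph and applies post-processing, and the mention-entity and coherence weights below are hypothetical.

```python
from typing import Dict, FrozenSet, Set, Tuple

# Hypothetical mention-entity weights (e.g. combining a prior and context similarity).
MENTION_ENTITY: Dict[Tuple[str, str], float] = {
    ("Paris", "Paris"): 0.6,
    ("Paris", "Paris_Hilton"): 0.4,
    ("France", "France"): 0.9,
    ("France", "France_national_football_team"): 0.1,
}
# Hypothetical entity-entity coherence weights.
COHERENCE: Dict[FrozenSet[str], float] = {frozenset({"Paris", "France"}): 0.6}


def weighted_degree(entity, alive, mention_entity, coherence):
    """Sum of the weights of all edges still attached to an entity node."""
    degree = sum(w for (_m, e), w in mention_entity.items() if e == entity)
    degree += sum(
        w for pair, w in coherence.items()
        if entity in pair and (pair - {entity}) <= alive
    )
    return degree


def greedy_dense_subgraph(mention_entity, coherence):
    candidates: Dict[str, Set[str]] = {}
    for m, e in mention_entity:
        candidates.setdefault(m, set()).add(e)
    alive = set().union(*candidates.values())
    while any(len(es) > 1 for es in candidates.values()):
        # An entity that is the last candidate of some mention must not be removed.
        removable = [
            e for e in alive
            if all(len(es) > 1 for es in candidates.values() if e in es)
        ]
        if not removable:
            break
        victim = min(removable, key=lambda e: weighted_degree(e, alive, mention_entity, coherence))
        alive.discard(victim)
        for es in candidates.values():
            es.discard(victim)
    # Each mention keeps its strongest surviving candidate.
    return {m: max(es, key=lambda e: mention_entity[(m, e)]) for m, es in candidates.items()}


print(greedy_dense_subgraph(MENTION_ENTITY, COHERENCE))
```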

Graph ranking (or vertex ranking) denotes algorithms such as PageRank (PR) and Hyperlink-Induced Topic Search (HITS), whose goal is to assign each vertex a score that represents its relative importance in the overall graph. The entity linking system presented in Alhelbawy et al. employs PageRank to perform collective entity linking on a disambiguation graph, and to understand which entities are more strongly related to each other and would represent a better linking.
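The following sketch runs PageRank by power iteration on a small weighted, undirected disambiguation graph and then keeps, for each mention, the highest-ranked candidate. The graph, its edge weights, and the damping factor of 0.85 are assumptions for illustration; Alhelbawy et al. combine PageRank with further features, which are omitted here.

```python
# Hypothetical disambiguation graph: candidate entities and relatedness-weighted edges.
CANDIDATES = {
    "Paris": ["Paris", "Paris_Hilton"],
    "France": ["France", "France_national_football_team"],
    "Seine": ["Seine"],
}
NODES = [e for es in CANDIDATES.values() for e in es]
EDGES = {("Paris", "France"): 0.6, ("Paris", "Seine"): 0.5, ("France", "Seine"): 0.4}


def pagerank(nodes, edges, damping=0.85, iterations=50):
    """Power-iteration PageRank on an undirected, weighted graph."""
    neighbors = {n: {} for n in nodes}
    for (a, b), w in edges.items():
        neighbors[a][b] = neighbors[a].get(b, 0.0) + w
        neighbors[b][a] = neighbors[b].get(a, 0.0) + w
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(iterations):
        new = {v: (1.0 - damping) / n for v in nodes}  # teleportation term
        for v in nodes:
            total = sum(neighbors[v].values())
            if total == 0:  # dangling node: spread its rank uniformly
                for u in nodes:
                    new[u] += damping * rank[v] / n
            else:  # otherwise split rank in proportion to edge weights
                for u, w in neighbors[v].items():
                    new[u] += damping * rank[v] * w / total
        rank = new
    return rank


def link_by_pagerank(candidates, rank):
    """For each mention, keep the candidate with the highest PageRank score."""
    return {m: max(es, key=rank.get) for m, es in candidates.items()}


scores = pagerank(NODES, EDGES)
print(link_by_pagerank(CANDIDATES, scores))
```

Candidates that are well connected to the other mentions' candidates accumulate rank, while isolated ones (here Paris_Hilton and the football team) keep only the baseline share.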

Mathematical entity linking

Mathematical expressions (symbols and formulae) can be linked to semantic entities (e.g., Wikipedia articles or Wikidata items) labeled with their natural language meaning. This is essential for disambiguation, since symbols may have different meanings (e.g., "E" can mean "energy" or "expectation value"). The math entity linking process can be facilitated and accelerated through annotation recommendation, e.g., using the AnnoMathTeX system hosted by Wikimedia.
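As a toy illustration of why symbols need disambiguation, the sketch below picks a meaning for a symbol by counting overlaps between the surrounding words and hypothetical cue words for each sense. The sense inventory and cue lists are invented, and this is not how AnnoMathTeX or any MathEL system works; it only shows the basic idea of using context to choose among meanings, returning None when no cue matches.

```python
import re
from typing import Dict, Optional, Set

# Hypothetical sense inventory: symbol -> {meaning: context cue words}.
SENSES: Dict[str, Dict[str, Set[str]]] = {
    "E": {
        "energy": {"mass", "speed", "light", "joule", "kinetic"},
        "expectation value": {"random", "variable", "probability", "mean"},
    },
}


def disambiguate_symbol(symbol: str, context: str) -> Optional[str]:
    """Return the meaning whose cue words overlap most with the context, or None."""
    tokens = set(re.findall(r"[a-z]+", context.lower()))
    best, best_overlap = None, 0
    for meaning, cues in SENSES.get(symbol, {}).items():
        overlap = len(tokens & cues)
        if overlap > best_overlap:
            best, best_overlap = meaning, overlap
    return best


print(disambiguate_symbol("E", "E = mc^2 relates mass and the speed of light"))
print(disambiguate_symbol("E", "E[X] is the mean of the random variable X"))
```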

To facilitate the reproducibility of Mathematical Entity Linking (MathEL) experiments, the benchmark MathMLben was created. It contains formulae from Wikipedia, arXiv, and the NIST Digital Library of Mathematical Functions (DLMF). Formula entries in the benchmark are labeled and augmented with Wikidata markup. Furthermore, the distributions of mathematical notation in two large corpora, from the arXiv and zbMATH repositories, were examined. Mathematical Objects of Interest (MOI) are identified as potential candidates for MathEL.

Besides linking to Wikipedia, Schubotz and Scharpf et al. describe linking mathematical formula content to Wikidata, in both MathML and LaTeX markup. To extend classical citations with mathematical ones, they call for a Formula Concept Discovery (FCD) and Formula Concept Recognition (FCR) challenge to advance automated MathEL. Their FCD approach yields a recall of 68% for retrieving equivalent representations of frequent formulae, and 72% for extracting the formula name from the surrounding text on the NTCIR arXiv dataset.


This article uses material from the English Wikipedia article Entity linking, which is released under the Creative Commons Attribution-ShareAlike 3.0 license ("CC BY-SA 3.0"); additional terms may apply. Content is available under CC BY-SA 4.0 unless otherwise noted. Images, videos and audio are available under their respective licenses.
