Binomial Distribution

In probability theory and statistics, the binomial distribution with parameters n and p is the discrete probability distribution of the number of successes in a sequence of n independent experiments, each asking a yes–no question, and each with its own Boolean-valued outcome: success (with probability p) or failure (with probability q = 1 − p).

A single success/failure experiment is also called a Bernoulli trial or Bernoulli experiment, and a sequence of outcomes is called a Bernoulli process; for a single trial, i.e., n = 1, the binomial distribution is a Bernoulli distribution. The binomial distribution is the basis for the popular binomial test of statistical significance.

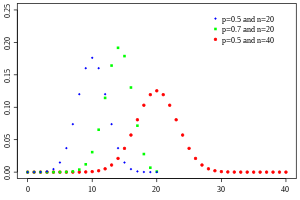

| Probability mass function  | |||

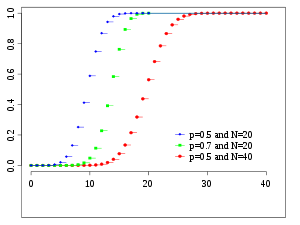

| Cumulative distribution function  | |||

| Notation | |||

|---|---|---|---|

| Parameters | – number of trials – success probability for each trial | ||

| Support | – number of successes | ||

| PMF | |||

| CDF | (the regularized incomplete beta function) | ||

| Mean | |||

| Median | or | ||

| Mode | or | ||

| Variance | |||

| Skewness | |||

| Excess kurtosis | |||

| Entropy | in shannons. For nats, use the natural log in the log. | ||

| MGF | |||

| CF | |||

| PGF | |||

| Fisher information | (for fixed ) | ||

Binomial distribution for p = 0.5 with n and k as in Pascal's triangle.

The probability that a ball in a Galton box with 8 layers (n = 8) ends up in the central bin (k = 4) is 70/256.

The binomial distribution is frequently used to model the number of successes in a sample of size n drawn with replacement from a population of size N. If the sampling is carried out without replacement, the draws are not independent and so the resulting distribution is a hypergeometric distribution, not a binomial one. However, for N much larger than n, the binomial distribution remains a good approximation, and is widely used.

Definitions

Probability mass function

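In general, if the random variable X follows the binomial distribution with parameters n ∈ ℕ and p ∈ [0, 1], we write X ~ B(n, p). The probability of getting exactly k successes in n independent Bernoulli trials (each with the same success probability p) is given by the probability mass function:

$$f(k, n, p) = \Pr(X = k) = \binom{n}{k} p^k (1 - p)^{n - k}$$

for k = 0, 1, 2, ..., n, where

$$\binom{n}{k} = \frac{n!}{k!\,(n - k)!}$$

is the binomial coefficient, hence the name of the distribution. The formula can be understood as follows: p^k q^(n − k) is the probability of obtaining any one particular sequence of n independent Bernoulli trials in which k trials are successes and the remaining n − k trials are failures. Because the trials are independent and p is the same for every trial, every such sequence has the same probability, regardless of where the successes fall. There are $\binom{n}{k}$ such sequences, since the binomial coefficient counts the ways to choose the positions of the k successes among the n trials.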

In creating reference tables for binomial distribution probability, usually the table is filled in up to n/2 values. This is because for k > n/2, the probability can be calculated by its complement as

$$f(k, n, p) = f(n - k, n, 1 - p).$$

Looking at the expression f(k, n, p) as a function of k, there is a k value that maximizes it. This k value can be found by calculating

$$\frac{f(k + 1, n, p)}{f(k, n, p)} = \frac{(n - k)\,p}{(k + 1)(1 - p)}$$

and comparing it to 1. There is always an integer M that satisfies

$$(n + 1)p - 1 \le M < (n + 1)p.$$

f(k, n, p) is monotone increasing for k < M and monotone decreasing for k > M, with the exception of the case where (n + 1)p is an integer. In this case, there are two values for which f is maximal: (n + 1)p and (n + 1)p − 1. M is the most probable outcome (that is, the most likely, although this can still be unlikely overall) of the Bernoulli trials and is called the mode.

Equivalently,

$$M - p < np \le M + 1 - p.$$

Example

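Suppose a biased coin comes up heads with probability 0.3 when tossed. The probability of seeing exactly 4 heads in 6 tosses is

$$f(4, 6, 0.3) = \binom{6}{4}\, 0.3^4\, (1 - 0.3)^{6 - 4} = 15 \times 0.0081 \times 0.49 = 0.059535.$$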
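The coin example can be reproduced numerically. The following is a minimal sketch using only the Python standard library; the function name `binom_pmf` is a label chosen here for illustration, not a library API.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """Probability of exactly k successes in n independent trials."""
    if not 0 <= k <= n:
        return 0.0  # outside the support {0, ..., n}
    return comb(n, k) * p**k * (1 - p) ** (n - k)


# Biased coin from the example: exactly 4 heads in 6 tosses with p = 0.3.
print(f"{binom_pmf(4, 6, 0.3):.6f}")

# Symmetry used when filling reference tables: f(k, n, p) = f(n - k, n, 1 - p).
print(abs(binom_pmf(5, 6, 0.3) - binom_pmf(1, 6, 0.7)) < 1e-12)
```

Summing `binom_pmf(k, 6, 0.3)` over k = 0, ..., 6 should give a value very close to 1, which is a quick sanity check on the implementation.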

Cumulative distribution function

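The cumulative distribution function can be expressed as:

$$F(k; n, p) = \Pr(X \le k) = \sum_{i=0}^{\lfloor k \rfloor} \binom{n}{i} p^i (1 - p)^{n - i},$$

where $\lfloor k \rfloor$ is the "floor" under k, i.e. the greatest integer less than or equal to k.

It can also be represented in terms of the regularized incomplete beta function, as follows:

$$F(k; n, p) = \Pr(X \le k) = I_{1 - p}(n - k, k + 1) = (n - k) \binom{n}{k} \int_0^{1 - p} t^{\,n - k - 1} (1 - t)^k \, dt,$$

which is equivalent to the cumulative distribution function of the F-distribution:

$$F(k; n, p) = F_{F\text{-distribution}}\!\left(x = \frac{1 - p}{p} \cdot \frac{k + 1}{n - k};\; d_1 = 2(n - k),\; d_2 = 2(k + 1)\right).$$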

Some closed-form bounds for the cumulative distribution function are given below.
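The summation form of the cumulative distribution function translates directly into code. This is a minimal sketch with the standard library; `binom_cdf` is a name chosen for illustration, and direct summation is only one of several ways to evaluate the CDF.

```python
from math import comb, floor


def binom_cdf(k: float, n: int, p: float) -> float:
    """Pr(X <= k) for X ~ B(n, p), by direct summation of the PMF."""
    if k < 0:
        return 0.0
    upper = min(floor(k), n)  # the CDF is a step function of k
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(upper + 1))


n, p = 6, 0.3
print(binom_cdf(2, n, p))    # Pr(X <= 2)
print(binom_cdf(2.7, n, p))  # same value: only floor(k) matters
```

Because the CDF only depends on the floor of k, non-integer arguments give the same value as the integer just below them.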

Properties

Expected value and variance

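If X ~ B(n, p), that is, X is a binomially distributed random variable, n being the total number of experiments and p the probability of each experiment yielding a successful result, then the expected value of X is:

$$\operatorname{E}[X] = np.$$

This follows from the linearity of the expected value along with the fact that X is the sum of n identical Bernoulli random variables, each with expected value p. In other words, if $X_1, \ldots, X_n$ are identical (and independent) Bernoulli random variables with parameter p, then $X = X_1 + \cdots + X_n$ and

$$\operatorname{E}[X] = \operatorname{E}[X_1 + \cdots + X_n] = \operatorname{E}[X_1] + \cdots + \operatorname{E}[X_n] = p + \cdots + p = np.$$

The variance is:

$$\operatorname{Var}(X) = npq = np(1 - p).$$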

This similarly follows from the fact that the variance of a sum of independent random variables is the sum of the variances.
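The closed forms for the mean and variance can be checked against a direct computation from the probability mass function. A minimal sketch follows; the helper name `mean_and_variance` and the values n = 10, p = 0.3 are illustrative choices.

```python
from math import comb


def mean_and_variance(n: int, p: float) -> tuple[float, float]:
    """Mean and variance of B(n, p), computed by summing over the support."""
    pmf = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    mean = sum(k * w for k, w in enumerate(pmf))
    var = sum((k - mean) ** 2 * w for k, w in enumerate(pmf))
    return mean, var


n, p = 10, 0.3
mean, var = mean_and_variance(n, p)
print(mean, n * p)            # both close to np
print(var, n * p * (1 - p))   # both close to np(1 - p)
```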

Higher moments

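The first 6 central moments, defined as $\mu_c = \operatorname{E}\!\left[(X - \operatorname{E}[X])^c\right]$, are given by

$$\begin{aligned}
\mu_1 &= 0, \\
\mu_2 &= np(1 - p), \\
\mu_3 &= np(1 - p)(1 - 2p), \\
\mu_4 &= np(1 - p)\bigl(1 + (3n - 6)p(1 - p)\bigr), \\
\mu_5 &= np(1 - p)(1 - 2p)\bigl(1 + (10n - 12)p(1 - p)\bigr), \\
\mu_6 &= np(1 - p)\bigl(1 - 30p(1 - p)(1 - 4p(1 - p)) + 5np(1 - p)(5 - 26p(1 - p)) + 15n^2 p^2 (1 - p)^2\bigr).
\end{aligned}$$

The non-central moments satisfy

$$\operatorname{E}[X] = np, \qquad \operatorname{E}[X^2] = np(1 - p) + n^2 p^2,$$

and in general

$$\operatorname{E}[X^c] = \sum_{k=0}^{c} \left\{ {c \atop k} \right\} n^{\underline{k}}\, p^k,$$

where $\left\{ {c \atop k} \right\}$ are the Stirling numbers of the second kind and $n^{\underline{k}} = n(n - 1) \cdots (n - k + 1)$ is the kth falling power of n. A simple bound follows by bounding the binomial moments via the higher Poisson moments:

$$\operatorname{E}[X^c] \le \left( \frac{c}{\ln(c/(np) + 1)} \right)^{c} \le (np)^c \exp\!\left( \frac{c^2}{2np} \right).$$

This shows that if $c = O(\sqrt{np})$, then $\operatorname{E}[X^c]$ is at most a constant factor away from $\operatorname{E}[X]^c$.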
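As a sanity check on the moment formulas, central moments can be computed exactly with rational arithmetic and compared with the closed forms μ₂ = np(1 − p), μ₃ = np(1 − p)(1 − 2p) and μ₄ = np(1 − p)(1 + (3n − 6)p(1 − p)). This is a sketch under the assumption that p is supplied as a `Fraction`; the function name is illustrative.

```python
from fractions import Fraction
from math import comb


def central_moment(c: int, n: int, p: Fraction) -> Fraction:
    """Exact c-th central moment of B(n, p), summed over the support."""
    pmf = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    mean = sum(k * w for k, w in enumerate(pmf))
    return sum((k - mean) ** c * w for k, w in enumerate(pmf))


n, p = 7, Fraction(1, 3)
q = 1 - p
print(central_moment(2, n, p) == n * p * q)
print(central_moment(3, n, p) == n * p * q * (1 - 2 * p))
print(central_moment(4, n, p) == n * p * q * (1 + (3 * n - 6) * p * q))
```

Using `Fraction` avoids floating-point round-off, so the comparisons are exact equalities rather than approximate ones.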

Mode

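Usually the mode of a binomial B(n, p) distribution is equal to $\lfloor (n + 1)p \rfloor$, where $\lfloor \cdot \rfloor$ is the floor function. However, when (n + 1)p is an integer and p is neither 0 nor 1, the distribution has two modes: (n + 1)p and (n + 1)p − 1. When p is equal to 0 or 1, the mode is 0 or n, respectively. These cases can be summarized as follows:

$$\text{mode} = \begin{cases}
\lfloor (n + 1)p \rfloor & \text{if } (n + 1)p \text{ is 0 or a noninteger}, \\
(n + 1)p \ \text{ and } \ (n + 1)p - 1 & \text{if } (n + 1)p \in \{1, \ldots, n\}, \\
n & \text{if } (n + 1)p = n + 1.
\end{cases}$$

Proof: Let

$$f(k) = \binom{n}{k} p^k q^{n - k}.$$

For p = 0 only f(0) has a nonzero value, with f(0) = 1. For p = 1 we find f(n) = 1 and f(k) = 0 for k ≠ n. This proves that the mode is 0 for p = 0 and n for p = 1.

Let 0 < p < 1. We find

$$\frac{f(k + 1)}{f(k)} = \frac{(n - k)\,p}{(k + 1)(1 - p)}.$$

From this follows

$$\begin{aligned}
k > (n + 1)p - 1 &\Rightarrow f(k + 1) < f(k), \\
k = (n + 1)p - 1 &\Rightarrow f(k + 1) = f(k), \\
k < (n + 1)p - 1 &\Rightarrow f(k + 1) > f(k).
\end{aligned}$$

So when (n + 1)p − 1 is an integer, both (n + 1)p − 1 and (n + 1)p are modes. When (n + 1)p − 1 is not an integer, only $\lfloor (n + 1)p - 1 \rfloor + 1 = \lfloor (n + 1)p \rfloor$ is a mode.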
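The case analysis for the mode can be checked by brute force. The sketch below uses exact rational arithmetic (`Fraction`) so that ties between two modes are detected reliably; both function names are illustrative.

```python
from fractions import Fraction
from math import comb, floor


def modes_by_search(n: int, p: Fraction) -> list[int]:
    """All k that maximize the PMF, found by exhaustive comparison."""
    pmf = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    peak = max(pmf)
    return [k for k, w in enumerate(pmf) if w == peak]


def modes_by_formula(n: int, p: Fraction) -> list[int]:
    """Modes according to the floor((n + 1)p) rule and its special cases."""
    t = (n + 1) * p
    if p == 1:
        return [n]
    if t == floor(t) and t >= 1:
        return [int(t) - 1, int(t)]  # two adjacent modes
    return [floor(t)]


print(modes_by_search(10, Fraction(3, 10)), modes_by_formula(10, Fraction(3, 10)))
print(modes_by_search(9, Fraction(3, 10)), modes_by_formula(9, Fraction(3, 10)))
```

With n = 9 and p = 3/10 the product (n + 1)p is the integer 3, which is the two-mode case.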

Median

In general, there is no single formula to find the median for a binomial distribution, and it may even be non-unique. However, several special results have been established:

- If np is an integer, then the mean, median, and mode coincide and equal np.

- Any median m must lie within the interval ⌊np⌋ ≤ m ≤ ⌈np⌉.

- A median m cannot lie too far away from the mean: |m − np| ≤ min{ln 2, max{p, 1 − p}}.

- The median is unique and equal to m = round(np) when |m − np| ≤ min{p, 1 − p} (except for the case when p = 1/2 and n is odd).

- When p is a rational number (with the exception of p = 1/2 and n odd) the median is unique.

- When p = 1/2 and n is odd, any number m in the interval (n − 1)/2 ≤ m ≤ (n + 1)/2 is a median of the binomial distribution. If p = 1/2 and n is even, then m = n/2 is the unique median.
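The median results above can be explored numerically. The sketch below lists the integer medians of B(n, p), using the definition that m is a median when Pr(X ≤ m) ≥ 1/2 and Pr(X ≥ m) ≥ 1/2; exact `Fraction` arithmetic and the function name are choices made for this illustration.

```python
from fractions import Fraction
from math import comb


def integer_medians(n: int, p: Fraction) -> list[int]:
    """Integers m with Pr(X <= m) >= 1/2 and Pr(X >= m) >= 1/2."""
    pmf = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    half = Fraction(1, 2)
    medians = []
    for m in range(n + 1):
        lower = sum(pmf[: m + 1])  # Pr(X <= m)
        upper = sum(pmf[m:])       # Pr(X >= m)
        if lower >= half and upper >= half:
            medians.append(m)
    return medians


print(integer_medians(5, Fraction(1, 2)))   # p = 1/2, n odd: non-unique
print(integer_medians(6, Fraction(1, 2)))   # p = 1/2, n even: unique
print(integer_medians(10, Fraction(3, 10))) # np is an integer
```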

Tail bounds

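For k ≤ np, upper bounds can be derived for the lower tail of the cumulative distribution function $F(k; n, p) = \Pr(X \le k)$, the probability that there are at most k successes. Since $\Pr(X \ge k) = F(n - k; n, 1 - p)$, these bounds can also be seen as bounds for the upper tail of the cumulative distribution function for k ≥ np.

Hoeffding's inequality yields the simple bound

$$F(k; n, p) \le \exp\!\left( -2n \left( p - \frac{k}{n} \right)^{2} \right),$$

which is however not very tight. In particular, for p = 1, we have that F(k; n, p) = 0 (for fixed k, n with k < n), but Hoeffding's bound evaluates to a positive constant.

A sharper bound can be obtained from the Chernoff bound:

$$F(k; n, p) \le \exp\!\left( -n\, D\!\left( \frac{k}{n} \,\Big\|\, p \right) \right),$$

where D(a || p) is the relative entropy (or Kullback–Leibler divergence) between an a-coin and a p-coin (i.e. between the Bernoulli(a) and Bernoulli(p) distributions):

$$D(a \,\|\, p) = a \ln \frac{a}{p} + (1 - a) \ln \frac{1 - a}{1 - p}.$$

Asymptotically, this bound is reasonably tight.

One can also obtain lower bounds on the tail F(k; n, p), known as anti-concentration bounds. Approximating the binomial coefficient with Stirling's formula shows that

$$F(k; n, p) \ge \frac{1}{\sqrt{8n \tfrac{k}{n}\left(1 - \tfrac{k}{n}\right)}} \exp\!\left( -n\, D\!\left( \frac{k}{n} \,\Big\|\, p \right) \right),$$

which implies the simpler but looser bound

$$F(k; n, p) \ge \frac{1}{\sqrt{2n}} \exp\!\left( -n\, D\!\left( \frac{k}{n} \,\Big\|\, p \right) \right).$$

For p = 1/2 and k ≥ 3n/8 for even n, it is possible to make the denominator constant:

$$F\!\left(k; n, \tfrac{1}{2}\right) \ge \frac{1}{15} \exp\!\left( -16n \left( \frac{1}{2} - \frac{k}{n} \right)^{2} \right).$$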
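To see how the Hoeffding and Chernoff upper bounds compare with the exact lower tail, the following sketch evaluates all three for one illustrative case (n = 100, p = 0.5, k = 40, values chosen here and not taken from the text). It assumes the bounds exp(−2n(p − k/n)²) and exp(−n D(k/n ‖ p)) with D the Bernoulli relative entropy; the function names are hypothetical.

```python
from math import comb, exp, log


def binom_cdf(k: int, n: int, p: float) -> float:
    """Exact Pr(X <= k) by direct summation."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def kl_bernoulli(a: float, p: float) -> float:
    """Relative entropy D(a || p) between Bernoulli(a) and Bernoulli(p)."""
    total = 0.0
    if a > 0:
        total += a * log(a / p)
    if a < 1:
        total += (1 - a) * log((1 - a) / (1 - p))
    return total


def hoeffding_bound(k: int, n: int, p: float) -> float:
    return exp(-2 * n * (p - k / n) ** 2)


def chernoff_bound(k: int, n: int, p: float) -> float:
    return exp(-n * kl_bernoulli(k / n, p))


n, p, k = 100, 0.5, 40  # requires k <= n * p
print("exact    ", binom_cdf(k, n, p))
print("Chernoff ", chernoff_bound(k, n, p))
print("Hoeffding", hoeffding_bound(k, n, p))
```

For k ≤ np the Chernoff value never exceeds the Hoeffding value, since D(a ‖ p) ≥ 2(a − p)² by Pinsker's inequality.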

Statistical inference

Estimation of parameters

When n is known, the parameter p can be estimated using the proportion of successes:

$$\hat{p} = \frac{x}{n}.$$

This estimator is obtained both by maximum likelihood and by the method of moments. It is unbiased and has uniformly minimum variance, which follows from the Lehmann–Scheffé theorem because it is based on a minimal sufficient and complete statistic (namely x). It is also consistent, both in probability and in MSE.

A closed-form Bayes estimator for p also exists when using the Beta distribution as a conjugate prior distribution. When using a general Beta(α, β) as a prior, the posterior mean estimator is:

$$\hat{p}_b = \frac{x + \alpha}{n + \alpha + \beta}.$$

The Bayes estimator is asymptotically efficient, and as the sample size approaches infinity (n → ∞) it approaches the MLE solution. The Bayes estimator is biased (how much depends on the priors), admissible, and consistent in probability.

For the special case of using the standard uniform distribution as a non-informative prior, Beta(α = 1, β = 1) = U(0, 1), the posterior mean estimator becomes:

$$\hat{p}_b = \frac{x + 1}{n + 2}.$$

(A posterior mode should just lead to the standard estimator.) This method is called the rule of succession, which was introduced in the 18th century by Pierre-Simon Laplace.

When relying on the Jeffreys prior, the prior is Beta(α = 1/2, β = 1/2), which leads to the estimator:

$$\hat{p}_{\text{Jeffreys}} = \frac{x + \tfrac{1}{2}}{n + 1}.$$

When estimating p with very rare events and a small n (e.g. if x = 0), using the standard estimator leads to $\hat{p} = 0$, which is sometimes unrealistic and undesirable. In such cases there are various alternative estimators. One way is to use the Bayes estimator $\hat{p}_b$, leading to:

$$\hat{p}_b = \frac{1}{n + 2}.$$

Another method is to use the upper bound of the confidence interval obtained using the rule of three:

$$\hat{p}_{\text{rule of 3}} = \frac{3}{n}.$$
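The point estimators discussed in this subsection are simple enough to compare side by side. The following sketch assumes the posterior-mean form (x + α)/(n + α + β) for a Beta(α, β) prior; the function names and the sample values x = 0, n = 20 are illustrative.

```python
def mle(x: int, n: int) -> float:
    """Proportion of successes (maximum likelihood / method of moments)."""
    return x / n


def posterior_mean(x: int, n: int, alpha: float = 1.0, beta: float = 1.0) -> float:
    """Bayes estimator under a Beta(alpha, beta) prior (uniform by default)."""
    return (x + alpha) / (n + alpha + beta)


def jeffreys(x: int, n: int) -> float:
    """Posterior mean under the Jeffreys prior Beta(1/2, 1/2)."""
    return posterior_mean(x, n, 0.5, 0.5)


def rule_of_three(n: int) -> float:
    """Upper confidence bound used as an estimate when x = 0."""
    return 3 / n


x, n = 0, 20  # a rare event that was never observed
print(mle(x, n), posterior_mean(x, n), jeffreys(x, n), rule_of_three(n))
```

With x = 0 the standard estimator returns exactly zero, while the other three return small positive values.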

Confidence intervals

Even for quite large values of n, the actual distribution of the mean is significantly nonnormal. Because of this problem several methods to estimate confidence intervals have been proposed.

In the equations for confidence intervals below, the variables have the following meaning:

- n1 is the number of successes out of n, the total number of trials

- p̂ = n1/n is the proportion of successes

- z is the 1 − α/2 quantile of a standard normal distribution (i.e., probit) corresponding to the target error rate α. For example, for a 95% confidence level the error α = 0.05, so 1 − α/2 = 0.975 and z = 1.96.

Wald method

$$\hat{p} \pm z \sqrt{\frac{\hat{p}(1 - \hat{p})}{n}}$$

A continuity correction of 0.5/n may be added.

Agresti–Coull method

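$$\tilde{p} \pm z \sqrt{\frac{\tilde{p}(1 - \tilde{p})}{n + z^2}}$$

Here the estimate of p is modified to

$$\tilde{p} = \frac{n_1 + \tfrac{1}{2} z^2}{n + z^2}.$$

This method works well for n > 10 and n1 ≠ 0, n. For n1 = 0 or n1 = n, use the Wilson (score) method below.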

Arcsine method

$$\sin^2\!\left( \arcsin\!\left( \sqrt{\hat{p}} \right) \pm \frac{z}{2\sqrt{n}} \right)$$

Wilson (score) method

The notation in the formula below differs from the previous formulas in two respects:

- Firstly, z_x has a slightly different interpretation in the formula below: it has its ordinary meaning of 'the xth quantile of the standard normal distribution', rather than being a shorthand for 'the (1 − x)-th quantile'.

- Secondly, this formula does not use a plus-minus to define the two bounds. Instead, one may use z = z_{α/2} to get the lower bound, or use z = z_{1 − α/2} to get the upper bound. For example, for a 95% confidence level the error α = 0.05, so one gets the lower bound by using z = z_{α/2} = z_{0.025} = −1.96, and one gets the upper bound by using z = z_{1 − α/2} = z_{0.975} = 1.96.

$$\frac{\hat{p} + \dfrac{z^2}{2n} + z \sqrt{\dfrac{\hat{p}(1 - \hat{p})}{n} + \dfrac{z^2}{4n^2}}}{1 + \dfrac{z^2}{n}}$$

Comparison

The so-called "exact" (Clopper–Pearson) method is the most conservative. (Exact does not mean perfectly accurate; rather, it indicates that the estimates will not be less conservative than the true value.)

The Wald method, although commonly recommended in textbooks, is the most biased.
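To compare the interval methods on concrete numbers, the sketch below implements the Wald, Agresti–Coull, arcsine and Wilson intervals using the standard-library `statistics.NormalDist` for the normal quantile. The function names, the default α = 0.05, the sample n1 = 15 of n = 50, and the clamping of the arcsine angle are all choices made for this illustration rather than part of the methods as stated.

```python
from math import asin, pi, sin, sqrt
from statistics import NormalDist


def z_quantile(alpha: float) -> float:
    """The 1 - alpha/2 quantile of the standard normal distribution."""
    return NormalDist().inv_cdf(1 - alpha / 2)


def wald_interval(n1, n, alpha=0.05):
    p_hat = n1 / n
    half = z_quantile(alpha) * sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - half, p_hat + half


def agresti_coull_interval(n1, n, alpha=0.05):
    z = z_quantile(alpha)
    n_tilde = n + z * z
    p_tilde = (n1 + z * z / 2) / n_tilde  # modified estimate of p
    half = z * sqrt(p_tilde * (1 - p_tilde) / n_tilde)
    return p_tilde - half, p_tilde + half


def arcsine_interval(n1, n, alpha=0.05):
    centre = asin(sqrt(n1 / n))
    delta = z_quantile(alpha) / (2 * sqrt(n))
    # Assumption of this sketch: clamp the angle to [0, pi/2] so bounds stay ordered.
    lo = max(centre - delta, 0.0)
    hi = min(centre + delta, pi / 2)
    return sin(lo) ** 2, sin(hi) ** 2


def wilson_interval(n1, n, alpha=0.05):
    p_hat = n1 / n
    z = z_quantile(alpha)

    def bound(zq):
        # zq is negative for the lower bound and positive for the upper bound.
        root = sqrt(p_hat * (1 - p_hat) / n + zq * zq / (4 * n * n))
        return (p_hat + zq * zq / (2 * n) + zq * root) / (1 + zq * zq / n)

    return bound(-z), bound(z)


for name, fn in [("Wald", wald_interval), ("Agresti-Coull", agresti_coull_interval),
                 ("Arcsine", arcsine_interval), ("Wilson", wilson_interval)]:
    lo, hi = fn(15, 50)
    print(f"{name:14s} [{lo:.4f}, {hi:.4f}]")
```

The Wilson and Agresti–Coull intervals share the same centre, (n1 + z²/2)/(n + z²), which is pulled from p̂ toward 1/2; they differ only in their widths.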

Related distributions

Sums of binomials

If X ~ B(n, p) and Y ~ B(m, p) are independent binomial variables with the same probability p, then X + Y is again a binomial variable; its distribution is Z = X + Y ~ B(n + m, p):

$$\begin{aligned}
\Pr(Z = k) &= \sum_{i=0}^{k} \left[ \binom{n}{i} p^i (1 - p)^{n - i} \right] \left[ \binom{m}{k - i} p^{k - i} (1 - p)^{m - k + i} \right] \\
&= \binom{n + m}{k} p^k (1 - p)^{n + m - k}.
\end{aligned}$$

A binomially distributed random variable X ~ B(n, p) can be considered as the sum of n Bernoulli distributed random variables. So the sum of two independent binomially distributed random variables X ~ B(n, p) and Y ~ B(m, p) is equivalent to the sum of n + m Bernoulli distributed random variables, which means Z = X + Y ~ B(n + m, p). This can also be proven directly using the addition rule.

However, if X and Y do not have the same probability p, then the variance of the sum will be smaller than the variance of a binomial variable distributed as $B(n + m, \bar{p})$, where $\bar{p}$ is the average success probability over the n + m trials.
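The additivity result can be confirmed numerically by convolving two probability mass functions. The sketch below also compares variances when the two success probabilities differ, taking the average probability to be (n·p₁ + m·p₂)/(n + m), which is an assumption of this illustration; all names and numbers are hypothetical.

```python
from math import comb


def binom_pmf_list(n: int, p: float) -> list[float]:
    return [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


def convolve(a: list[float], b: list[float]) -> list[float]:
    """PMF of the sum of two independent variables with PMFs a and b."""
    out = [0.0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out


# Same p: the convolution matches B(n + m, p).
n, m, p = 4, 6, 0.3
summed = convolve(binom_pmf_list(n, p), binom_pmf_list(m, p))
direct = binom_pmf_list(n + m, p)
print(max(abs(s - d) for s, d in zip(summed, direct)))

# Different p: the variance of the sum is below that of B(n + m, p_bar).
p1, p2 = 0.2, 0.7
var_sum = n * p1 * (1 - p1) + m * p2 * (1 - p2)
p_bar = (n * p1 + m * p2) / (n + m)
var_pooled = (n + m) * p_bar * (1 - p_bar)
print(var_sum, var_pooled)
```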

Poisson binomial distribution

The binomial distribution is a special case of the Poisson binomial distribution, which is the distribution of a sum of n independent non-identical Bernoulli trials B(p_i).

Ratio of two binomial distributions

This result was first derived by Katz and coauthors in 1978.

Let X ~ B(n, p1) and Y ~ B(m, p2) be independent. Let T = (X/n) / (Y/m).

Then log(T) is approximately normally distributed with mean log(p1/p2) and variance ((1/p1) − 1)/n + ((1/p2) − 1)/m.

Conditional binomials

If X ~ B(n, p) and Y | X ~ B(X, q) (the conditional distribution of Y, given X), then Y is a simple binomial random variable with distribution Y ~ B(n, pq).

For example, imagine throwing n balls at a basket U_X, then taking the balls that hit and throwing them at another basket U_Y. If p is the probability of hitting U_X, then X ~ B(n, p) is the number of balls that hit U_X. If q is the probability of hitting U_Y, then the number of balls that hit U_Y is Y ~ B(X, q), and therefore Y ~ B(n, pq).
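The two-basket result can be verified by marginalizing over X: Pr(Y = k) is the sum over x of Pr(X = x) · Pr(Y = k | X = x). A minimal sketch, with function names and the values n = 8, p = 0.6, q = 0.5 chosen for illustration:

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def marginal_of_y(k: int, n: int, p: float, q: float) -> float:
    """Pr(Y = k) when X ~ B(n, p) and Y | X ~ B(X, q)."""
    return sum(binom_pmf(x, n, p) * binom_pmf(k, x, q) for x in range(k, n + 1))


n, p, q = 8, 0.6, 0.5
for k in range(n + 1):
    # The two columns should agree up to floating-point round-off.
    print(k, marginal_of_y(k, n, p, q), binom_pmf(k, n, p * q))
```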

Bernoulli distribution

The Bernoulli distribution is a special case of the binomial distribution, where n = 1. Symbolically, X ~ B(1, p) has the same meaning as X ~ Bernoulli(p). Conversely, any binomial distribution, B(n, p), is the distribution of the sum of n independent Bernoulli trials, Bernoulli(p), each with the same probability p.

Normal approximation

If n is large enough, then the skew of the distribution is not too great. In this case a reasonable approximation to B(n, p) is given by the normal distribution

$$\mathcal{N}\bigl(np,\; np(1 - p)\bigr),$$

and this basic approximation can be improved in a simple way by using a suitable continuity correction. The basic approximation generally improves as n increases (at least 20) and is better when p is not near to 0 or 1. Various rules of thumb may be used to decide whether n is large enough, and p is far enough from the extremes of zero or one:

- One rule is that for n > 5 the normal approximation is adequate if the absolute value of the skewness is strictly less than 0.3; that is, if

$$\frac{|1 - 2p|}{\sqrt{np(1 - p)}} = \frac{1}{\sqrt{n}} \left| \sqrt{\frac{1 - p}{p}} - \sqrt{\frac{p}{1 - p}} \right| < 0.3.$$

This can be made precise using the Berry–Esseen theorem.

- A stronger rule states that the normal approximation is appropriate only if everything within 3 standard deviations of its mean is within the range of possible values; that is, only if

$$\mu \pm 3\sigma = np \pm 3\sqrt{np(1 - p)} \in (0, n).$$

- This 3-standard-deviation rule is equivalent to the following conditions, which also imply the first rule above.

$$n > 9 \left( \frac{1 - p}{p} \right) \quad \text{and} \quad n > 9 \left( \frac{p}{1 - p} \right).$$

- Another commonly used rule is that both values np and n(1 − p) must be greater than or equal to 5. However, the specific number varies from source to source, and depends on how good an approximation one wants. In particular, if one uses 9 instead of 5, the rule implies the results stated in the previous paragraphs.

The following is an example of applying a continuity correction. Suppose one wishes to calculate Pr(X ≤ 8) for a binomial random variable X. If Y has a distribution given by the normal approximation, then Pr(X ≤ 8) is approximated by Pr(Y ≤ 8.5). The addition of 0.5 is the continuity correction; the uncorrected normal approximation gives considerably less accurate results.
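The effect of the continuity correction can be seen on a concrete case. The text does not fix n and p for this example, so the sketch below assumes n = 20 and p = 0.5 purely for illustration, and uses the standard-library `statistics.NormalDist` for the normal CDF.

```python
from math import comb, sqrt
from statistics import NormalDist

n, p, k = 20, 0.5, 8  # illustrative values; only k = 8 comes from the text

# Exact Pr(X <= 8) from the binomial PMF.
exact = sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))

# Normal approximation with mean np and variance np(1 - p).
approx = NormalDist(mu=n * p, sigma=sqrt(n * p * (1 - p)))
corrected = approx.cdf(k + 0.5)  # Pr(Y <= 8.5), with continuity correction
uncorrected = approx.cdf(k)      # Pr(Y <= 8), without it

print(f"exact       {exact:.4f}")
print(f"corrected   {corrected:.4f}")
print(f"uncorrected {uncorrected:.4f}")
```

In this case the corrected value lies much closer to the exact probability than the uncorrected one.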

This approximation, known as the de Moivre–Laplace theorem, is a huge time-saver when undertaking calculations by hand (exact calculations with large n are very onerous); historically, it was the first use of the normal distribution, introduced in Abraham de Moivre's book The Doctrine of Chances in 1738. Nowadays, it can be seen as a consequence of the central limit theorem since B(n, p) is a sum of n independent, identically distributed Bernoulli variables with parameter p. This fact is the basis of a hypothesis test, a "proportion z-test", for the value of p using x/n, the sample proportion and estimator of p, in a common test statistic.

For example, suppose one randomly samples n people out of a large population and asks them whether they agree with a certain statement. The proportion of people who agree will of course depend on the sample. If groups of n people were sampled repeatedly and truly randomly, the proportions would follow an approximate normal distribution with mean equal to the true proportion p of agreement in the population and with standard deviation

$$\sigma = \sqrt{\frac{p(1 - p)}{n}}.$$

Poisson approximation

The binomial distribution converges towards the Poisson distribution as the number of trials goes to infinity while the product np converges to a finite limit. Therefore, the Poisson distribution with parameter λ = np can be used as an approximation to the binomial distribution B(n, p) if n is sufficiently large and p is sufficiently small. According to rules of thumb, this approximation is good if n ≥ 20 and p ≤ 0.05 such that np ≤ 1, or if n > 50 and p < 0.1 such that np < 5, or if n ≥ 100 and np ≤ 10.

Concerning the accuracy of Poisson approximation, see Novak, ch. 4, and references therein.
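A quick numerical comparison illustrates the Poisson approximation. The sketch below uses n = 100 and p = 0.03 (so λ = np = 3), values picked here to satisfy the last rule of thumb; the function names are illustrative.

```python
from math import comb, exp, factorial


def binom_pmf(k: int, n: int, p: float) -> float:
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k: int, lam: float) -> float:
    return exp(-lam) * lam**k / factorial(k)


n, p = 100, 0.03
lam = n * p

# Largest pointwise difference between the two PMFs over k = 0, ..., n.
max_gap = max(abs(binom_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(n + 1))
print(max_gap)

for k in range(7):
    print(k, round(binom_pmf(k, n, p), 4), round(poisson_pmf(k, lam), 4))
```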

Limiting distributions

- Poisson limit theorem: As n approaches ∞ and p approaches 0 with the product np held fixed, the Binomial(n, p) distribution approaches the Poisson distribution with expected value λ = np.

- de Moivre–Laplace theorem: As n approaches ∞ while p remains fixed, the distribution of

$$\frac{X - np}{\sqrt{np(1 - p)}}$$

approaches the normal distribution with expected value 0 and variance 1. This result is sometimes loosely stated by saying that the distribution of X is asymptotically normal with expected value np and variance np(1 − p). This result is a specific case of the central limit theorem.

Beta distribution

The binomial distribution and beta distribution are different views of the same model of repeated Bernoulli trials. The binomial distribution is the PMF of k successes given n independent events each with a probability p of success. Mathematically, when α = k + 1 and β = n − k + 1, the beta distribution and the binomial distribution are related by a factor of n + 1:

$$\operatorname{Beta}(p; \alpha, \beta) = (n + 1)\, B(k; n, p).$$

Beta distributions also provide a family of prior probability distributions for binomial distributions in Bayesian inference:

$$P(p; \alpha, \beta) = \frac{p^{\alpha - 1} (1 - p)^{\beta - 1}}{\operatorname{Beta}(\alpha, \beta)}.$$

Given a uniform prior, the posterior distribution for the probability of success p given n independent events with k observed successes is a beta distribution.
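The factor-of-(n + 1) relationship between the beta density and the binomial PMF, with α = k + 1 and β = n − k + 1, can be checked directly. The sketch below writes the beta density in terms of `math.gamma`; the function names and test values are illustrative.

```python
from math import comb, gamma, isclose


def beta_pdf(x: float, a: float, b: float) -> float:
    """Density of the Beta(a, b) distribution at x."""
    norm = gamma(a + b) / (gamma(a) * gamma(b))
    return norm * x ** (a - 1) * (1 - x) ** (b - 1)


def binom_pmf(k: int, n: int, p: float) -> float:
    return comb(n, k) * p**k * (1 - p) ** (n - k)


n, k, p = 10, 3, 0.3
lhs = beta_pdf(p, k + 1, n - k + 1)  # posterior density under a uniform prior
rhs = (n + 1) * binom_pmf(k, n, p)
print(lhs, rhs, isclose(lhs, rhs, rel_tol=1e-9))
```

Read as a function of p, the left-hand side is the posterior density of the success probability after observing k successes in n trials with a uniform prior.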

Computational methods

Random number generation

Methods for random number generation where the marginal distribution is a binomial distribution are well-established. One way to generate random variates from a binomial distribution is to use an inversion algorithm. To do so, one first calculates the probability Pr(X = k) for all values k from 0 through n. (These probabilities should sum to a value close to one, in order to encompass the entire sample space.) Then, using a pseudorandom number generator to draw samples uniformly between 0 and 1, one transforms each uniform sample into a discrete value by locating it within the cumulative sums of the probabilities calculated in the first step.
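The inversion algorithm described above can be sketched as follows. This is one straightforward realization using the standard library (`bisect` for the table lookup); the function name `make_binomial_sampler` is hypothetical, and production code would normally rely on a library routine instead.

```python
import random
from bisect import bisect_right
from itertools import accumulate
from math import comb


def make_binomial_sampler(n: int, p: float, rng: random.Random):
    """Return a function that draws one B(n, p) variate by inversion."""
    # Step 1: tabulate Pr(X = k) for k = 0..n and form the cumulative sums.
    pmf = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    cdf = list(accumulate(pmf))

    def sample() -> int:
        # Step 2: draw u uniformly from [0, 1) and find the first k with cdf[k] > u.
        u = rng.random()
        k = bisect_right(cdf, u)
        return min(k, n)  # guards against round-off when cdf[n] is slightly below 1

    return sample


rng = random.Random(0)  # fixed seed so runs are repeatable
draw = make_binomial_sampler(10, 0.3, rng)
samples = [draw() for _ in range(20000)]
print(sum(samples) / len(samples))  # should be close to np = 3
```

Building the cumulative table costs O(n) once, after which each draw is a binary search; for very large n other algorithms are usually preferred.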

History

This distribution was derived by Jacob Bernoulli. He considered the case where p = r/(r + s) where p is the probability of success and r and s are positive integers. Blaise Pascal had earlier considered the case where p = 1/2, tabulating the corresponding binomial coefficients in what is now recognized as Pascal's triangle.

See also

- Logistic regression

- Multinomial distribution

- Negative binomial distribution

- Beta-binomial distribution

- Binomial measure, an example of a multifractal measure.

- Statistical mechanics

- Piling-up lemma, the resulting probability when XOR-ing independent Boolean variables


Further reading

- Hirsch, Werner Z. (1957). "Binomial Distribution—Success or Failure, How Likely Are They?". Introduction to Modern Statistics. New York: MacMillan. pp. 140–153.

- Neter, John; Wasserman, William; Whitmore, G. A. (1988). Applied Statistics (Third ed.). Boston: Allyn & Bacon. pp. 185–192. ISBN 0-205-10328-6.


This article uses material from the Wikipedia English article Binomial distribution, which is released under the Creative Commons Attribution-ShareAlike 3.0 license ("CC BY-SA 3.0"); additional terms may apply (view authors). Content is available under CC BY-SA 4.0 unless otherwise noted. Images, videos and audio are available under their respective licenses.

